3D Artist Alexei Hatherley talks about the automated animation techniques and streamlined character design his team used to keep to schedule at high quality for a new kids TV series.

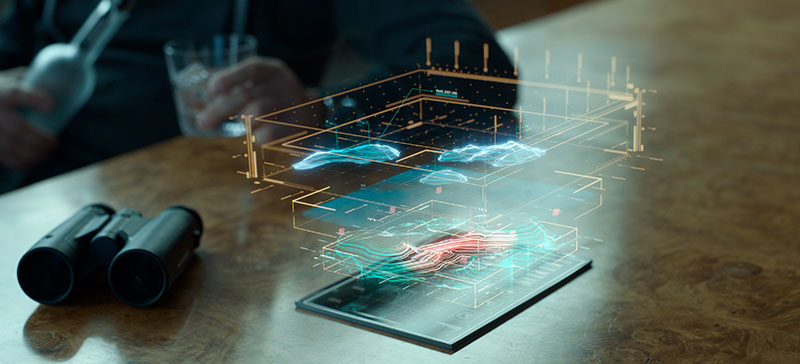

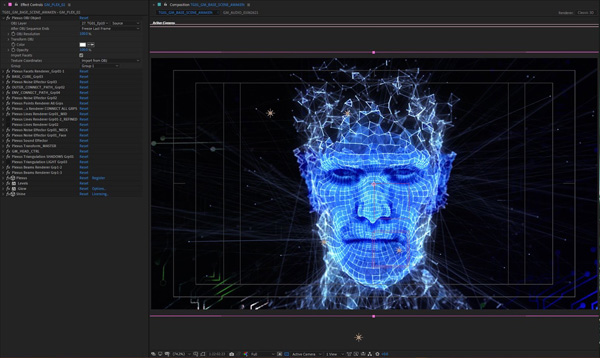

Preparing to render the Game Master with animation in After Effects

Ambience Entertainment’s live-action TV adventure series for 8- to 12-year-olds, ‘The Gamers 2037’, premiered on 9GO! in November 2020. The story concerns three young friends determined to overcome a Virtual and Augmented Reality Game, VAARG, that has gone wrong and started digitising and absorbing players.

The only way to release the Absorbed players is to beat the ‘unbeatable’ game, and the artificial intelligence that controls it. But those who try and fail risk becoming trapped themselves. The three friends must work together to beat the game.

Ambience Entertainment’s work on the show included the facial animation of the digital characters inside the game – its relentless Game Master and two comical commentators called Maeve and Marvin. Between them, these characters deliver quite a lot of dialogue that keeps the story moving. Meanwhile, the artists faced the challenges of a limited budget, a small team and a short time frame in which to produce a 26 x 24-minute series.

Automating Animation

To help maintain the quality and consistency of the animation and meet their deadlines, the production worked with Speech Graphics, developers of computer facial animation software that automatically delivers speech animation and lip sync from an audio recording.

Speech Graphics software interprets moods from the sounds it detects in actors’ voices, using an AI model trained in the relationships between moods and facial expressions. “So far, Speech Graphics has mainly been used in games but it has some feature film applications as well, especially to get keyframing cycles started and speed them up,” said Keaton Stewart, the series’ Creator and Writer at Ambience. He and 3D Artist and Motion Designer Alexei Hatherley estimate that their team spent only about 10 percent of the time on facial animation that a traditional keyframed process would have taken.

The generated content can be integrated directly into an existing animation pipeline using a set of software plugins. To define in advance the mood he knew he wanted the Game Master character to express, Alexei used Speech Graphics’s SGX and SG Studio plug-ins, which aligned the system to suit their purposes and vision by customising the AI model.

Emotional Nuance

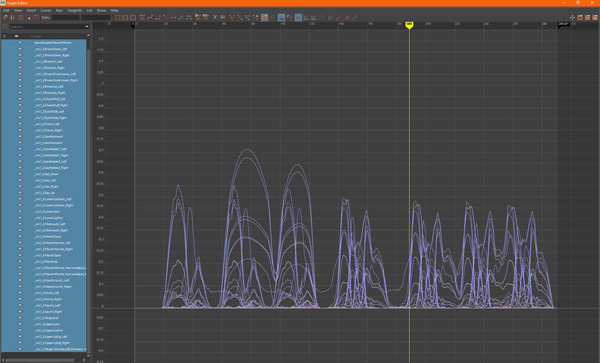

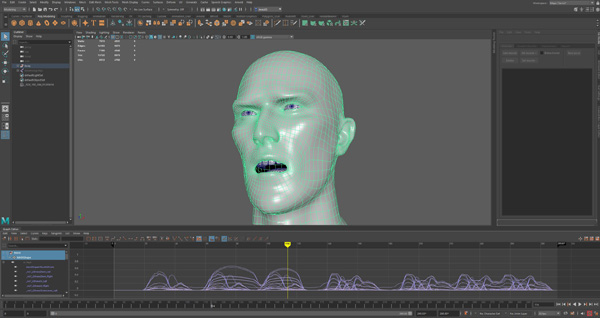

SG Studio defines the moods and range of intensity needed to produce the emotional nuance you envisioned for a character. To match their pipeline, Ambience used the version developed for Autodesk Maya. Alexei said, “Since the Game Master needed to appear very serious all the time, we created a ‘serious’ preset mood in the SG Studio Maya plug-in to make sure he didn't raise his eyebrows or smile at all. The first step is to select various blendshapes from the plug-in's interface to create appropriate facial expressions, which can be saved as specific moods. Whenever these expressions are used, they activate the blendshapes you've selected.”

Selecting blendshapes
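The idea of a saved mood can be pictured as a named set of blendshape weights that is scaled by an intensity value. The snippet below is only an illustrative sketch of that concept, not the SG Studio plug-in's API: the blendshape names, the `MOOD_LIBRARY` dictionary and the `mood_weights` function are all hypothetical.

```python
# Hypothetical mood library: mood name -> {blendshape name: weight at full intensity}.
# These names are invented for illustration; a real rig defines its own blendshapes.
MOOD_LIBRARY = {
    # A "serious" mood that simply never selects brow-raise or smile shapes.
    "serious": {"browLower": 0.7, "lipPress": 0.4},
}

def mood_weights(library, mood, intensity):
    """Return the blendshape weights a mood activates at a given intensity (0.0 to 1.0)."""
    intensity = max(0.0, min(1.0, intensity))  # keep intensity in a sensible range
    return {shape: weight * intensity for shape, weight in library[mood].items()}

half_serious = mood_weights(MOOD_LIBRARY, "serious", 0.5)
print(half_serious)  # {'browLower': 0.35, 'lipPress': 0.2}
```

Because the preset only lists the shapes that were selected for it, shapes such as a smile or raised eyebrows are never activated by this mood in the sketch.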

Alexei then sent Speech Graphics the rigged character as a Maya .ma file that contained information defining the geometry, lighting and rendering properties of the 3D scene. After processing, Speech Graphics sent a custom character definition file back to Ambience containing all of the rig re-targeting information and the Mood Library that the SGX software needs to automate the animation. “At this point markup can also be added to the transcripts if you intend to adjust the mood throughout the dialogue lines. Since our character didn't need so much variation across his dialogue, we could skip this step,” said Alexei.

“Step two is to run the SGX application to generate facial animation from your audio and transcripts using the customised character definition file. Step three is to import the generated animation files into Maya using the SGX plugin, which applies the generated keyframes to your character.

Hybrid Rigging

“We approached Maeve and Marvin somewhat differently. In their case, SGX was allowed to interpret the tone of the voice actors and automatically apply facial expressions that reflected what the character was feeling. If required, we could adjust those expressions manually afterwards, using keyframes in Maya.”

Ambience used a hybrid of blendshapes and facial joint rigs for their characters, since the software is capable of processing both. “The team at Speech Graphics can suggest adding or tweaking different movements to help generate better animation, and in our case their artists helped a lot by adding extra joints to our characters when required.”

Character Design

As well as animation, Alexei talked about designing these characters, who are quite different from each other and needed totally different approaches for their creation. The Game Master, an ominous floating digital head, draws on concepts ranging from as far back as the famous Wizard in ‘The Wizard of Oz’ to the more recent Zordon from ‘Power Rangers’.

“The Game Master needed to reflect the future of AI and mixed reality, anticipating that soon, digital games will be projected into our real space,” Alexei said. “We needed a way to make the Game Master feel as though he were crossing between both of those worlds. Features from Rowbyte's Plexus, a particle engine plug-in, really became the foundation for the Game Master's look, with the ability to break the 3D model apart and allow him to connect with his environment via protruding nodes.”

Plexus allows users to produce generative art from inside a host application like Adobe After Effects. Artists can create, manipulate and visualise data procedurally – as well as rendering the particles, they can create varied relationships between them based on parameters using lines and triangles. As the workflow for Plexus is very modular, no two sets of configurations and parameters are alike, giving distinctive outputs each time.
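As a rough illustration of what a parameter-driven relationship between particles can look like, the sketch below joins any two points with a line when they lie within a chosen distance of each other. It shows the general idea only and makes no claim about how Plexus evaluates its own parameters; the function name `connect_nearby` and the sample points are assumptions made for the example.

```python
from itertools import combinations
from math import dist

def connect_nearby(points, max_distance):
    """Return index pairs (i, j) for every pair of points no farther apart than max_distance."""
    return [
        (i, j)
        for (i, p), (j, q) in combinations(enumerate(points), 2)
        if dist(p, q) <= max_distance
    ]

# Four sample 3D points: three close together, one far away.
points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (5.0, 5.0, 5.0)]
lines = connect_nearby(points, max_distance=1.5)
print(lines)  # [(0, 1), (0, 2), (1, 2)]
```

Here the three neighbouring points end up joined by three lines, which also form a triangle, while the distant point stays unconnected. Changing the distance threshold changes the structure that appears.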

Constraints and Expectations

CG artist Brylan Stewart was responsible for the two game commentators Maeve and Marvin, starting with just a few weeks for quick character ideas. “I was given pretty strong direction on Marvin, tending toward a gorilla or gorilla-like design,” he said. “As reference, I started with Winston from the game Overwatch but he had to have a cockier look, like a cool kid at school. I had a few ideas about adding a crown or a hairstyle, like Johnny Bravo, but there were restrictions on the poly count and time constraints, and so I settled on a silverback gorilla as a reference point.

“I was asked to design Maeve with a kabuki mask in mind, which was an exciting idea. The main inspiration for her look was Drift from Fortnite, who wears a kabuki fox mask. After working through some issues on the facial expression that such a design would cause, we went with a more organic look.”

When it came to their bodies, everyone liked the idea of power suits similar to those in Power Rangers, to which Brylan added a few bulkier parts to create a better silhouette. Tron, of course, was a big inspiration for the texturing of the characters. He said, “I used layers of digital designs to colour the suits and create the look. With Maeve I played a lot with the tattoos on her face, evolving through some softer Egyptian designs but ending with an edgier, hard line.”

Rendering Workflows

At render time, the team used two different workflows to accommodate the details of the different characters. Alexei said, “Since we wanted the Game Master to have broken geometry that could be further animated inside After Effects, we exported the animation as an OBJ sequence and ran it through Plexus to achieve that specific look.

“The Maeve and Marvin scenes run to around 40 minutes across the series, so rendering through Maya was not going to be possible given our time constraints. Exporting the pair of them as an OBJ sequence, and running them through Video Copilot Element 3D to render out of After Effects, gave us the look we needed and significantly reduced their render time. For example, rendering a two-minute Maeve and Marvin scene out of After Effects with Element 3D only took us two hours, whereas rendering through Arnold in Maya would have taken 4 to 5 minutes per frame.”
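To put the per-frame figure on the same footing as the two-hour figure, the comparison can be worked through as simple arithmetic. The article does not state the frame rate, so the 25 frames per second used below is an assumption, as is rendering on a single machine with no render farm.

```python
def render_hours(scene_seconds, fps, minutes_per_frame):
    """Total render time in hours for a scene rendered one frame at a time."""
    frames = scene_seconds * fps
    return frames * minutes_per_frame / 60

FPS = 25             # assumed frame rate; not stated in the article
SCENE_SECONDS = 120  # the two-minute scene quoted above
ELEMENT_3D_HOURS = 2 # Element 3D render time quoted above

arnold_low = render_hours(SCENE_SECONDS, FPS, 4)   # at 4 minutes per frame
arnold_high = render_hours(SCENE_SECONDS, FPS, 5)  # at 5 minutes per frame

print(arnold_low, arnold_high)  # 200.0 250.0
print(arnold_low / ELEMENT_3D_HOURS, arnold_high / ELEMENT_3D_HOURS)  # 100.0 125.0
```

Under those assumptions, the two-minute scene is 3,000 frames, so the Arnold estimate comes to roughly 200 to 250 hours against two hours through Element 3D, a difference of about 100 times or more.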

www.ambienceentertainment.com