Framestore’s motion capture and light played fascinating roles in the production and post of the science fiction fantasy ‘Jupiter Ascending’.

Framestore Brings Light, Life & Motion to 'Jupiter Ascending'

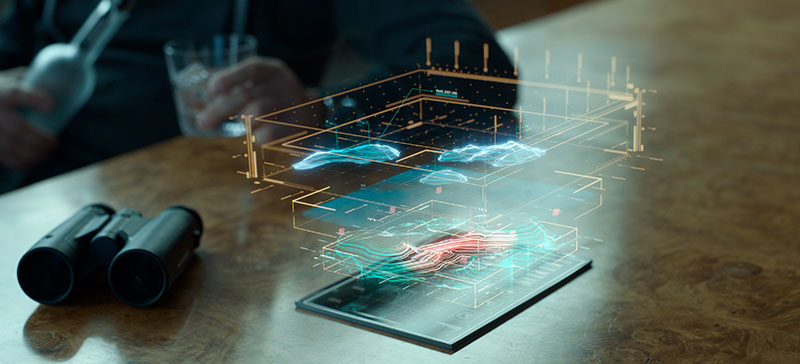

Framestore's London and Montreal teams delivered over 500 shots for the science fiction fantasy movie ‘Jupiter Ascending’, producing diverse visual effects from alien character creation and animation, digital doubles, space environments, space ships and exotic interiors, to many simulations and fluid and explosive effects. Digital Media World spoke to members of Framestore’s animation and motion capture teams, lighting TDs and FX artists about this project, which took nearly two years to complete.


Aliens

In pre-production, Framestore focussed first on developing their two alien creature characters, the Keepers and the Sargorns. The Keepers are sneaky, agile little aliens who can confusingly slip in and out of view as they leap about, bouncing off the walls. The Sargorns, in contrast, are threatening, reptilian characters standing two metres tall. A team of animators carried out animation tests over a six-month period at Leavesden studios, and helped the production plan the sequences in which the creatures appeared.

Performance capture was extremely useful in animating both the aliens and the various digital doubles Framestore created. It was carried out mainly as movement exploration, guiding and informing the dynamic action sequences, rather than as true motion capture animation. For example, when planning a sequence in Balem’s DNA laboratory in which the hero Caine flies in and begins shooting at a pack of Keepers, actors were harnessed on wires and fitted with tracking markers. Their actions were captured, and the resulting motion data was retargeted to models and supplied to the previs teams at Framestore and at The Third Floor, helping them decide how to build up the action. Individual animations could be merged into one scene to work out how to block the action. Meanwhile, the animators used the same motion capture as a jumping-off point for keyframing.


Twitchy Insects

Animation lead in London, Michael Brunet, described developing the Keepers. It took quite a bit of time and various techniques to nail their behaviour. “At first, they were going to be more logically motivated and less creature-like than they are in the movie,” he said. “As the story and previs developed, however, the production wanted them to be spookier, cling to walls and even shift in and out of view. They also didn’t depend on typical walk or run cycles – we identified and practised the key moves ahead of the shots arriving, and handled each shot as a performance.

“Because they had so little flesh or fat to work with, and joints without the usual human constraints on rotation, they required a fair amount of shot-fixing. Our riggers went in afterwards to adjust certain areas by letting the joints reverse themselves very quickly, snapping their arms back in a violent, twitchy way, like insects.”

Although the Keepers were completely keyframed with no true mocap used, the animators could start with captured reference of themselves acting out the more human-like, recognisable moves, and of the stunt actors for the more alien movement.

Mocap-Previs Workflow

Motion capture lead Gary Marshall in London said that although they couldn’t capture truly extreme moves directly, the real-time review, solving and rendering techniques the capture team was using allowed them to iterate repeatedly through variations of the actual moves. “The actor could watch his performance retargeted to his character’s model in real time and see the effect of his moves, translated to the creature’s proportions,” he said.


Painting Invisibility

The Keepers have another quality that makes them special: an ability to duck in and out of view with a subtle sliding effect. FX supervisor Andy Hayes said, “This invisibility effect was created by placing lots of geometry over the Keeper's body. These pieces tracked with its movements, and then rippled in rotation to give the impression of a flowing, concealing form of energy. They were rendered with an iridescent shader to add a variety of colours cascading across the surface.

“In shots where the effect is brutally turned off - such as when they are shot - we developed a method to control the opacity of the cubes in an animated, dissipating fashion. Typically we would paint maps onto the Keeper to specify where the propagating failure would start, and then add parameters to control its speed across the body, because the reveal was often heavily art directed.”
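The dissipation control described above can be illustrated with a minimal Python sketch. It is not Framestore's implementation: the function names, the `falloff` parameter, the smoothstep fade and the use of straight-line distance from painted start points are all assumptions made for illustration. Each piece of geometry stays fully concealing until a failure front, travelling at an art-directable speed, reaches it, and then fades out.

```python
import math


def distance_to_seed(piece_pos, seed_points):
    """Distance from one geometry piece to the nearest painted start point.

    Straight-line distance is an assumption; a production setup would more
    likely measure distance across the body surface.
    """
    return min(math.dist(piece_pos, seed) for seed in seed_points)


def dissipation_opacity(distance, time, speed, falloff=0.5):
    """Opacity of one piece: 1.0 is fully concealing, 0.0 is gone.

    distance: distance of the piece from the painted start region
    time:     time since the failure was triggered
    speed:    how fast the failure front travels across the body
    falloff:  width of the soft edge behind the front (hypothetical parameter)
    """
    front = speed * time          # how far the failure has spread so far
    behind = front - distance     # positive once the front has passed the piece
    if behind <= 0.0:
        return 1.0                # front has not arrived yet
    if behind >= falloff:
        return 0.0                # piece has fully dissipated
    t = behind / falloff
    return 1.0 - t * t * (3.0 - 2.0 * t)   # smoothstep fade from 1 to 0


if __name__ == "__main__":
    seeds = [(0.0, 1.2, 0.0)]     # hypothetical painted start point
    pieces = [(0.0, 1.2, 0.0), (0.0, 0.6, 0.0), (0.0, 0.0, 0.0)]
    for t in (0.0, 0.5, 1.0):
        row = [dissipation_opacity(distance_to_seed(p, seeds), t, speed=1.0)
               for p in pieces]
        print(t, [round(v, 2) for v in row])
```

Raising `speed` makes the reveal sweep across the body faster, while moving the seed points changes where it begins, which mirrors the two controls mentioned in the quote.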


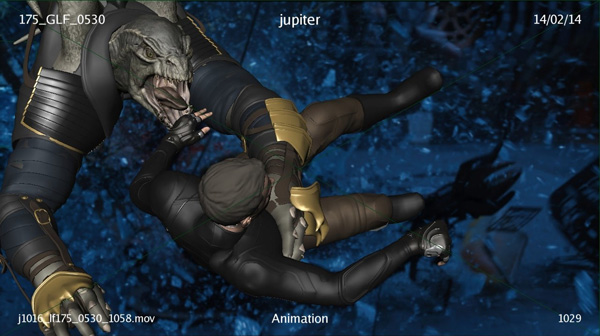

Dual Nature

The Sargorns had to be approached differently to the Keepers, not only because they were physically so different but because one of them, named Greegan, took an important role in the story. These creature characters are powerful, reptilian and possess a dual nature. Although they are intelligent, speak and communicate with people and stand upright, in an instant they can flare into a rage, dropping to the ground to fight like an animal and flying on their great leathery wings.

Michael Brunet said, “Portraying Greegan involved rolling two characters into one. For his more human scenes, the actor Ariyon Bakare was performing on set with the rest of the cast, and we emulated him in every way when animating the CG model. His performances literally defined that character - from the way he held himself and moved to how he addressed the camera and the live action characters.

“Having him there in all scenes helped maintain consistency, and the motion capture helped us a lot for posture and timing, although the proportions of the model were too different to allow direct retargeting – Greegan has very long arms, plus heavy, highly articulated wings. The wings were quite tricky to handle, and we needed to closely co-ordinate the wing beats with the rest of the body moves. Their large size also made it hard to compose the shots effectively.”


Dinosaur Face Rig

The Sargorns’ head, jaw and teeth design gave the animators a separate set of challenges. Early on, they advised the production that the head design in the concept would need some modification to work effectively for speech, but the directors were keen to keep the design as it was. The animators then focussed on adding movement in the throat to show how difficult it was for the Sargorns to speak normally, and that the rumbling, guttural voice came more from their chest than the mouth.

“Nevertheless, a full face rig was built to move the nostrils and fleshier sides of the mouth. The area to the front could only move a little to avoid interfering with the teeth,” said Michael. “The Sargorns’ tough, thick scaly skin introduced further limitations on expression. We avoided stretching moves and kept the face tense and taut, like a dinosaur’s face might look. Especially when Greegan was talking, we had to avoid over-animating.”

Headcam & Stilts

On the motion capture side, Gary Marshall described the capture volume set-up. “We worked with Ariyon Bakare in a volume measuring about 50ft x 30ft, where we had 16 Vicon T40 cameras arranged in a roughly rectangular grid. We focussed on certain aspects of his performance and one was the facial animation, using Vicon’s facial capture system Cara, a headcam fitted with four cameras recording 720p video. We put special makeup on his face, which shows up in the recorded video as points in the Vicon Blade solving software and serves as the basis of an animated mouth. Any time Ariyon was performing on set, we had the headcam on him capturing data.”


For some of his scenes Ariyon also wore stilts, which were sprung to provide some bounce in his walk. It took him a while to get used to moving around on them, but it was also a challenge for Gary’s team to translate the stilts into leg extensions, requiring some unusual character rigs in order to solve for that motion. The stilts also affected the range-of-motion calibration done at the start of each capture session. As with the Keepers, he could watch himself moving as Greegan in the real-time playback, which was very helpful because he was portraying such a large, heavy character.

Jet Boots

The hero of the story, Caine, was a live action character, but his ability to fly around on jet boots made full or partial CG replacements of his body essential at times. Wherever possible in the movie, we see the live action actor or stunt replacement negotiating ramps built on the stage on a pair of inline skates. In post, the ramps were removed and CG jet boots were added.

“Skating made a good approximation of the desired motion, which the directors described as similar to surfing, although the best moves resulted from skating along a flat 2D plane. As soon as the ramps were inclined too much, the skater was forced to change his leg action to less flowing moves,” Michael Brunet said. “We would analyse the footage in the plates and then decide where CG replacements were going to be necessary, instead of trying to plan ahead.”


Skaters and Surfers

“In such cases, we referenced an ice hockey player’s moves for cornering and crossovers, and back to the surfer for slaloms and low spins,” said Michael. “We figured out how the character Caine would do this or that move, if it were possible, taking both his personal style and the needs of the story into consideration.”

The motion capture team contributed to Caine’s CG replacement animations by building up a skating volume that the actor Channing Tatum used to test his moves. Gary Marshall said, “We motion-captured a French roller blade champion as well for many of the ‘skating’ scenes as reference for the animators.

“For a scene set in Chicago where we see Caine leaping between buildings, a big green screen and treadmill arrangement had been suspended over the street, on which Channing performed on wires. Not surprisingly it was a challenge to get a good performance. Keyframing a digital actor, using the motion captured more safely on the ground as reference, was a lot more successful in the end.”


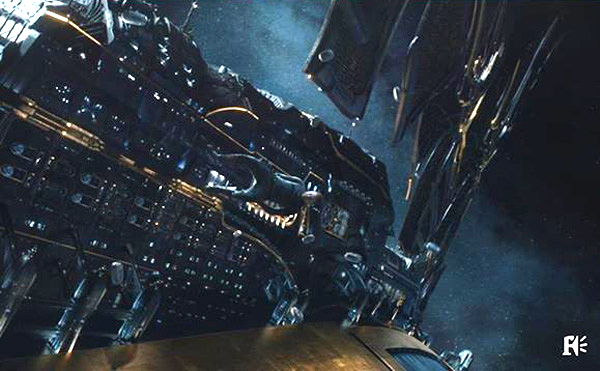

Ncam Camera Tracking

For on-the-fly rendering of live action with CG elements, the Ncam camera tracking system, which records the camera’s position and orientation in real time, was on set with the production as well. “For the fight scene, where we had an actor geared up as Greegan, the Ncam technician rendered out the mocap data with the live action to show the production and VFX supervisor Dan Glass how a CG and a real character performing together would look,” said Gary. “We spent a couple of days working this way. Seeing a CG/live action composite within our shots was very useful for visualising that sequence. It helped block the animation, position the camera and plan eyelines, and allowed lots of iteration. I also had a chance to see our team’s mocap work go into previs, and come back out again for the Ncam team to use on set to do their real-time rendering for the shoot – which was great!”

A Billion Polygons

Framestore created several space ships for ‘Jupiter Ascending’. The most complex was the Titus Clipper, three kilometres long and made up of about a billion polygons, making it larger than the International Space Station Framestore built for the movie ‘Gravity’. It is also more detailed than the Space Station because it functions as a floating city. Part of the challenge was giving the model enough complexity to sell the scale while still looking functional, so its construction involved a combination of techniques.

Lighting TD in Montreal Bruno Laflamme said, “The general strategy for the Clipper was ‘per shot’ adaptation - designing, modelling, texturing and architectural lighting. An overall first pass of it had been done in modelling to produce the general shape, but because we had decided from the start that upres-ing shot by shot was going to be the approach, the asset built for the Clipper wasn’t signed off before shot production, as it would be in a normal VFX pipeline. That is, the asset needed to evolve constantly as we received new plate turnovers.”


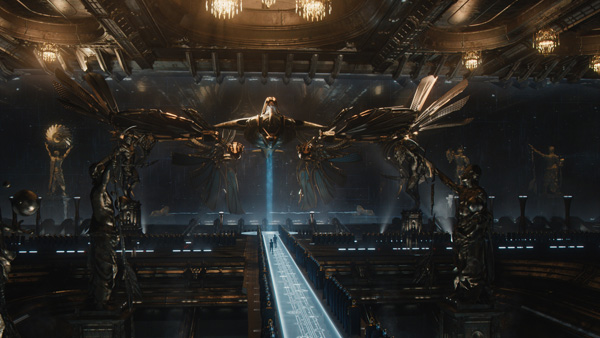

Speeding through Space

Positioning the Clipper in space and suggesting its speed was another challenge. There is no debris floating in space, and no atmospheric depth or fog to help the viewers’ eyes produce the illusion of distance, so the artists relied on traditional camera movement techniques to help the viewer intuitively feel the distance between the elements. Camera shakes, short distances travelled by the moving camera surrounded by many fast-travelling elements, and other similar tricks lent a sense of infinite scale to the layouts.

We first see the ship in flight, rushing along and emerging up through the icy rings of a planet. The team found this look to be another interesting challenge. Bruno said, “The director's brief was leading us away from building these ice rings out of smooth mist or any other smoke type of volumes. Instead, it was meant to be a crazy amount of small blocks of ice. The scale of the scene pushed us to split that massive simulation into more than 25 smaller pre-processed ones. Each of them was generated in Houdini, using a physically based fluid simulation system. For the LookDev and rendering side of it, we were quite satisfied to let Arnold deal with rendering that huge quantity of objects as a single beauty pass.”

Intergalactic Interiors

The Clipper comes to rest at Balem’s palace in a huge dock, a gleaming environment built with grand architecture and lit with hundreds of individual lights. The live action, shot on a single stage surrounded by green screen, occupies only a small section of it.

Mathieu Bertrand, also a lighting TD at the Montreal studio, said, “Because the dock was going to be built out as a full CG environment to use in all shots, instead of per shot digital matte paintings, we dealt with each of the lights individually, once, and grouped them by name according to which region of the dock they were lighting, so they could be easily selected. Furthermore, using CG lights gave us the correct, physically plausible reflections and light bounces off the reflective surfaces.
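Grouping lights by name, as described for the dock, can be sketched in a few lines of Python. The naming scheme below (`region_role_index`) and the function name are hypothetical; the article does not say how the dock lights were actually named or which tool did the grouping.

```python
from collections import defaultdict


def group_lights_by_region(light_names, separator="_"):
    """Group light names by the region encoded as their first name token.

    Assumes a hypothetical convention such as 'dockNorth_key_01', where the
    text before the first separator names the region the light illuminates.
    """
    groups = defaultdict(list)
    for name in light_names:
        region = name.split(separator, 1)[0]
        groups[region].append(name)
    return dict(groups)


if __name__ == "__main__":
    lights = ["dockNorth_key_01", "dockNorth_fill_02", "gantry_spot_01"]
    for region, names in group_lights_by_region(lights).items():
        print(region, names)
```

With a convention like this, selecting every light for one region of the environment is a single dictionary lookup rather than a manual pick across hundreds of lights.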


“But I think the inspiration really came from photochromic lenses, the type that can darken when exposed to certain types of light, for example, ultraviolet radiation. When they are not exposed, they behave like standard, transparent glass. To maintain a glass-like feeling, we generated CG proxies of the actors to show them reflected on the floor,” he said. “The other challenge was reconciling the different lighting moods within the same frame. The Lab was shot under a cool light while the boardroom is pretty warm. We used the glass floor as a light filter that absorbs certain wavelengths of the light - a bit like your sunglasses. In a way, our approach was to recreate a material that met the director’s specifications while keeping the physics plausible.”
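The idea of a floor that absorbs some wavelengths more than others can be illustrated with the Beer-Lambert law, in which the transmitted fraction of light is exp(-absorption x thickness). This is a swapped-in textbook model, not the shader Framestore wrote: the function name, the RGB approximation of wavelength and the coefficient values are assumptions for illustration only.

```python
import math


def filter_through_glass(rgb, absorption, thickness=1.0):
    """Attenuate an RGB light colour passing through tinted glass.

    rgb:        incoming light as (r, g, b) intensities
    absorption: per-channel absorption coefficients (hypothetical values)
    thickness:  path length through the glass, in the same units
    Uses the Beer-Lambert law per channel: out = in * exp(-a * d).
    """
    return tuple(c * math.exp(-a * thickness) for c, a in zip(rgb, absorption))


if __name__ == "__main__":
    warm_light = (1.0, 0.8, 0.6)             # hypothetical warm source colour
    absorb_red = (1.2, 0.3, 0.1)             # glass that absorbs red the most
    print(filter_through_glass(warm_light, absorb_red, thickness=0.5))
```

Because the absorption is set per channel, the same warm source reads as cooler once it has passed through the glass, which is one plausible way two lighting moods could share a frame.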


Warhammer Rescue Mission

At a point in the story when Caine and Jupiter’s protector Stinger need to rescue her from the Clipper, they steal two one-man fighter ships called Zeros from an armoury. The Zeros protect themselves by each releasing a swarm of Warhammers, about half a million mines zooming out ahead of them on their way to the ship. With so many Warhammers to dodge between, plus the explosions and debris, choreographing the elements so that the audience could follow the action visually was essential and affected the artists’ decisions on looks and animation.

“Making the sequence readable was a big challenge,” said CG Supervisor Andy Walker. “There were several phases of development. The choreography was massively affected by the collective behaviour of the Warhammers. In the first stage of blocking, a style of collective movement was established and set pieces were designed using that style of motion to create key moments in the sequence. The set pieces were then arranged to produce a crescendo of jeopardy as the sequence progressed. This cycle was repeated until we had a style and sequence everyone was happy with.


Above: ‘Zero-G’ may be familiar territory for Framestore, but this sequence from the film was shot differently to ‘Gravity’. Instead of moving the lights around with LED panels, actor Channing Tatum was moved on a robotic arm against green screen, which meant he was suffering under gravitational effects and looked stressed - but that actually worked well for the story. The rig was removed and Channing was rotoscoped out and placed into the digital environment, or in some cases they had to replace everything digitally from the neck down to get the performance right for zero gravity.


Explosive Choreography

“The animation team would also lay out a first pass of hero explosions, and then aim the guns where they wanted to create more. Everything would then be pushed through the FX pipeline to add the procedurally triggered explosions - and then through lighting to see how it looked. It was very important to cycle everything through the pipe as it was very difficult to gauge the final effect from an animation submission. We gradually learnt the visual language of the sequence and were able to make good decisions in animation as to what would work.”

Preventing the Warhammers from looking flat, or even invisible, was a huge problem in the dark environment. They tended to all be either ‘on’ or ‘off’. This was solved by adding a very slow, modulated wave of rotation through the squadrons, so they caught the light and glimmered like a shoal of fish. This was the key to bringing them to life. The explosions were then used as backlighting to help differentiate the Zeros from the Warhammers, all followed by debris, debris and more debris to make the stereo effect work dramatically.

“Overall, it was a real combination of all departments working together to make the whole thing come together,” Andy noted. “A small change in animation could cascade through the pipe and make a completely different shot, so it was important to have control over everything and to be able to bring back explosions from previous versions, or even a tiny piece of debris that you particularly liked. But at the same time you had to embrace the chaos of destruction that came with each new animation and subsequent destruction simulation and mould it into something that works to tell the story.”

www.framestore.com

Words: Adriene Hurst