AEAF WINNER - FEATURE FILM VFX

MPC’s VFX supervisors Charley Henley and Richard Stammers describe their work on the Prometheus, the Juggernaut and the cold, beautiful world the mysterious Engineers left behind.


The visual effects team at MPC started work on ‘Prometheus’ in late 2010, earlier than most facilities, because the production VFX supervisor, Richard Stammers, was based at MPC. During preproduction, director Ridley Scott approached the team with a large set of concept drawings prepared by the Art Department at his production company Scott Free, mainly depicting the planet’s surface with the spaceship Prometheus travelling over it. “From there we moved quickly into previs, quite early, with Ridley working with us here at MPC in London,” said MPC’s VFX supervisor Charley Henley. “He would come into the office to make drawings for us, while we examined the angles in the artwork and recreated some of the cameras.”


The first scene they put together was in fact the climactic Juggernaut crash sequence, when the colossal alien ship falls back to the planet’s surface and rolls over the landscape, looming over the two astronauts from Earth. Ridley was on hand to direct the previs on this critical sequence, devising new shots as he went, which the team would cut together. “Although it was actually one of the final scenes, this work helped set the stage and served as an introduction to the rest of the project. It involved a post-vis phase as well, after the shoot, so that very early in post-production, within a few weeks of the cut, we were looking at the whole scene including looks and all of the action and getting sign-off. Doing this sequence first meant we had time to push the look and sell it, which in turn drew Richard and Ridley into what the movie could and would become. From there we could focus on improving the quality.”


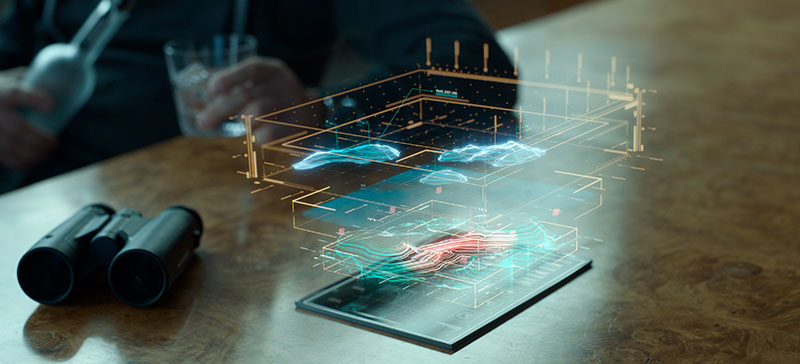

Shots and Vendors

MPC, because they worked on the environments and both ships, ended up with the largest number of shots, while the intensive creature work the film demanded fell to Weta Digital. The need for a sophisticated design team to handle the holographic representations in the story, including the holotable and astronomical mapping, suggested Fuel VFX in Sydney as another vendor. Ridley especially favoured their initial designs. The fourth main facility was Hammerhead in LA, who took over the holographics in character Peter Weyland’s briefing and Charlie Holloway’s research presentation.


Perfect Light, Perfect Canvas

“It wasn’t due to any detail on the ground or in the landscape. It was simply the look overall that it gave to the images as a base for the digital work. The skies, the light and the nights lasting little more than two hours were great advantages. Low sun, overcast days with a small amount of directional light also worked very well for the DP Dariusz Wolski.”

Charley explained that prior to the choice of Iceland for the live action footage, Ridley had printed off an image of Wadi Rum from the Internet showing a mountainous valley, into which he drew the alien pyramid structures. “This was his first potential image for how the planet surface should look. We researched Wadi Rum further, using Google Earth, and found imagery to serve as a base for our mountain backdrops,” Charley said. “We also sourced elevation maps with enough information to create a basic model of the valley and rocks around Wadi Rum in Maya. From there we could previs flybys through this location. Clearly, it was a pivotal environment for us to lock into at that early stage. Meanwhile, the production continued scouting for shoot locations, still considering places in England or the idea of relying partly on built sets.”


Helicopter Photography

“The green of any plants that might be showing was keyed out, because the planet wasn’t meant to have any vegetation at all, and the sky was often enhanced. Wherever the spaceships were shown on the ground, the landscape had to be manipulated, of course, and whenever we were using the Jordan footage for flybys, we had to change the look of the desert sand and sky, using either CG or elements from Iceland - stormy skies, lightning and so on - to create the alterations.

“On the other hand, the Jordan plates were mainly used to base the giant rock mountain terrain upon. In any case, the primary goal was to use as much photography as possible, instead of venturing into fantasy. It was to be an earth-based planet. With both sets of plates to use as source material, as soon as the spaceship leaves the space environment and approaches the planet to land, the images viewers see are essentially the live action plates, albeit enhanced.”


Playing with Scale

Having sourced the necessary geography and elevation data from Google Earth that they had used to build Wadi Rum, the team created a layout pass by working together with Ridley in 3D to design the landscape. He was keen to scale up the mountains to about three times larger than reality. They also experimented with different layouts for the domes and the precise spot for the ship to land. Not only did Ridley enjoy working with them directly on the virtual cameras, but the sessions also meant they had shot design information quite early and could scale other elements accordingly. Going forward, they made sure that the aliens’ pyramids, the ships and so on were all designed to be scalable. “Ridley’s sense of composition is such that he’s willing to cheat sizes of objects to achieve the looks that he wants. Therefore, while we would size up the valley geography, putting the camera in a particular position might also mean adjusting the sizes of elements for dramatic effect,” Charley said.
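The scaling step described here can be illustrated with a small sketch. It assumes the elevation data is a plain grid of heights in metres and that the exaggeration is applied vertically about the valley floor; the function name `exaggerate_heights`, the sample grid and that interpretation of "three times larger" are illustrative assumptions, not a description of MPC's actual layout tools.

```python
def exaggerate_heights(grid, factor=3.0):
    """Scale terrain relief about its lowest point.

    grid   -- list of rows of elevations in metres (hypothetical sample data)
    factor -- exaggeration; 3.0 mirrors the "about three times" in the text
    """
    floor = min(min(row) for row in grid)
    # Keep the valley floor where it is and stretch everything above it.
    return [[floor + (h - floor) * factor for h in row] for row in grid]


# Hypothetical 2 x 3 patch of elevation samples.
sample = [
    [900.0, 950.0, 1000.0],
    [900.0, 1100.0, 1300.0],
]
scaled = exaggerate_heights(sample)
print(scaled)
```

Scaling about the lowest point keeps the ground plane fixed, so elements placed on the valley floor, such as a landed ship, would not need to be repositioned when the factor changes.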


Rocky Libraries

This provided a library of mountain textures plus lighting, applicable to any shot they had to build out. If the foreground characters had been shot in Iceland with sun shining from the left, they could match it with mountain textures from the library. The photographically projected environment based on original lighting was always going to be superior to relighting digitally.

The actors occupying the midground were typically captured as a part of the Iceland plates, so about half of the exterior action shots were shot in Iceland itself. But in some settings, for example around the garage area, they were shot on the back lot at Pinewood Studios surrounded by a huge green screen. “In these cases we rebuilt Iceland as well,” said Charley, “again exaggerating the amount and size of the rocks, all based on the local volcanic formations that we called ‘pinnacles’ lifting up from the ground. These environments are mostly fully digital to match specific lighting scenarios. A scanning team also collected information for us to model a library of rocks, as we had done for Jordan.”

Although the film was full of digital effects, the goal was not a feeling of fantasy. Referencing real events, motion, looks, light and textures was important to Ridley, as it was to MPC. Therefore, the quality of the 3D compositing, all done in Nuke, relied heavily on relating all elements to something real. For example, real 2D elements were always added to explosions, including that final explosion of the Prometheus itself – real debris, fire and smoke elements.


Ship Building

These gave them a sound basis for design style and mood but as the camera moved in closer, huge areas of the ship needed extra detail, such as the panel layout or detailing on the aerials. The ship was eventually seen from so many angles that completing and balancing it was a mammoth task. One of the senior concept artists, Ben Procter, helped on some ideas, and real spacecraft were researched, the space shuttles for example, for colour tones – ‘NASA white’ was the preferred shade – and materials. They studied classic spaceship designs as well, ranging from ‘2001: A Space Odyssey’ to the Nostromo from ‘Alien’, from which useful photo reference was available for panel detailing.

Their ship work focussed on the exteriors. The interiors were built sets. The bridge was a large set built on a moveable gimbal to create rocking effects as the ship landed. The very wide cockpit windows were all shot against green screen, which MPC replaced with star fields representing the view into space, adding glass complete with reflections and dirt.


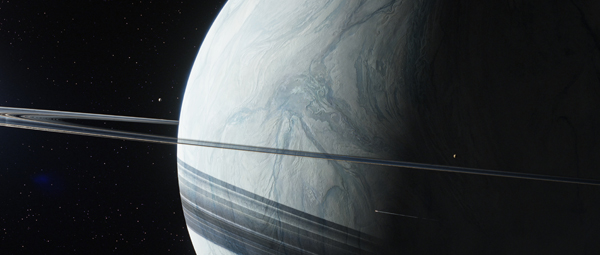

How should space look?

“NASA-based imagery was a starting point, and photography of real planets,” Richard said. “The ‘gas giant’ type of planet with surrounding moons seen in the film was based on images of Saturn - we took the texture of one of its moons and rings as influences.

“The look of the star fields was also concepted from real images. We previs’d the camera moves around the Prometheus to capture the storytelling points, suiting what both Ridley and the editor Pietro Scalia wanted to achieve and creating the right mood for the space ships. Especially for the early reveals of the ship, we previs’d options that were both original and worked well in 3D. Because the whole film was shot stereoscopically, all VFX shots had to be produced in stereo.”

Agile Spaceship


“We tried to work this kind of action into the take-off and landing but of course had to tone it down to convey weight and scale,” said Richard, who felt strongly about animating the ship realistically. “The four massive engines articulate and rotate, to work as down thrusters and result in an action closer to the way a Harrier jump jet might land. The design was complex due to the articulating pieces, creating a challenge for the animation supervisor Ferran Domenich when balancing their motion to create the landing feet.”

The Juggernaut represented a completely different, alien style of ship to design and build. While it involved certain elements of the earlier derelict ship from ‘Alien’, Ridley wanted it to become much larger and more detailed. A Russian artist named Güterlin, among others, worked specifically to give it a distinctive style – which Charley called ‘techno Giger’ – and gave the concept art a pass that resulted in a heavy technical challenge when the team tried to cover such a large asset with so much detail.

Dangerous Detailing


“We put a huge amount of detail into the model so that we could still use digital matte paintings for the extreme close ups to give it an extra finish. Much of the design work relied on ZBrush, but we had to constantly determine and fine-tune the balance between creating the shape by displacement maps, which was done as one pass consisting of textures calculated on render, or in the modelling in Maya’s physical geometry, or in a pass covering the overall detail. The render was handled in RenderMan.”

Because the complete ship was so heavy, they needed to build three different versions of it at different levels of detail in order to maintain their productivity, using the low-res model for shots when the camera was furthest away and saving the high-res version with the matte paintings for close ups. Rendering was managed not only through these levels of detail: because the surface detail came from displacement, they could also trade render quality against render time by changing the shading rate depending on which part of the ship was closest to camera. The Juggernaut was so large that often only part of the ship was close. The rest was at a distance, and so the shading rate could be fine-tuned across the whole surface.
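As a rough illustration of this kind of distance-based budgeting, the sketch below picks one of three model versions per shot and a coarser shading rate for parts of the ship further from camera. The thresholds, function names and the linear falloff are all hypothetical; the article gives no figures, and this is not MPC's or RenderMan's actual logic. It only relies on the general convention in micropolygon renderers that a smaller shading rate means finer, slower shading.

```python
# Hypothetical distance limits in metres for the three model versions.
LOD_LIMITS = [(500.0, "high"), (5000.0, "medium")]


def pick_lod(camera_distance):
    """Return which of the three model versions a shot would load."""
    for limit, name in LOD_LIMITS:
        if camera_distance <= limit:
            return name
    return "low"


def shading_rate(part_distance, near=100.0, finest=1.0, coarsest=16.0):
    """Return a shading rate that coarsens linearly with distance.

    Parts at or inside `near` get the finest rate; the result is
    clamped so that very distant parts never exceed `coarsest`.
    """
    rate = finest * max(part_distance, near) / near
    return min(rate, coarsest)


# One shot, with different parts of the ship at different distances.
for part, dist in [("hull_front", 50.0), ("hull_mid", 400.0), ("hull_rear", 10000.0)]:
    print(part, pick_lod(dist), shading_rate(dist))
```

Evaluating the rate per part rather than per asset reflects the point made above: on an object this large, only a small region usually needs the finest setting.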


Calculated Smash

The breakup of the ground as the Juggernaut emerges from the silo used a different technique, not KALI. It was modelled as a piece of ground that could be animated to open up. They could add effects on top of this, such as the dust falling from the edges.


Charley explained, “On set, a practical set piece 20ft x 20ft was built for the character Elizabeth Shaw to jump across. The previs we completed for the sequence helped determine the size required, resulting in drawings and plans for the practical build which would be digitally extended in post at MPC. This was constructed in two pieces on a truck, so that it could be opened.

“The action was shot at the Pinewood back lot and combined with the wider exteriors from the Iceland shoot. To help the cameraman in the helicopter to frame up his shots, they had marked out onto the landscape the circumference of the massive silo. It was just over a kilometre wide, of which the set of course only represented a small portion – just enough for Dr Shaw to perform the jumps in a safety-controlled set-up.”

They also had the plates showing her running across the Icelandic terrain and could use these textures on their extensions while animating the ground to open. On top of coordinating this imagery, they had the emerging ship to animate and composite, reinforcing the need for previs for timing and pacing.


Stormy Weather

“Because it had to be so huge, we broke it down into sections, starting with about 25, but in order to add enough detail across the sections we had to continue breaking down the area into more, smaller sections resulting in about 100 separate caches of storm. Fortunately we started on it early enough in the schedule to allow us to keep refining it to the end of the project.”

The cloudscapes were another otherworldly and beautiful phenomenon developed from images shot here on earth – “We couldn’t go anywhere else,” Charley joked – some of which were based on time lapse photography captured in Iceland. It was shot mainly on the production’s RED Epic cameras in their stereo rigs, but they could also use the Canon 1Ds cameras they generally use for texture shoots, whenever they happened to see a beautiful-looking sky. Shooting at 1 fps was a suitable speed.

“We were lucky to have some incredible skies while we were in Iceland. We used this footage to create fast moving skies but wanted to avoid a time lapse appearance, where clouds just appear and disappear in quick succession, aiming to find just the right speed and type of cloud. At times we were manipulating and warping the images during compositing to create enough internal motion, while maintaining the speed of the drift,” he said.
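The arithmetic behind the time lapse speed is simple enough to sketch. The 1 fps capture rate comes from the text; the 24 fps playback rate and the 6x target below are assumptions chosen for illustration, and the function names are hypothetical.

```python
def speedup(capture_fps, playback_fps=24.0):
    """How many times faster than real time the footage plays back."""
    return playback_fps / capture_fps


def retime_step(capture_fps, target_speedup, playback_fps=24.0):
    """Source frames to advance per output frame for a target speed-up.

    A step below 1.0 means the footage must be slowed down, with
    in-between frames interpolated or warped in compositing.
    """
    return target_speedup * capture_fps / playback_fps


# 1 fps capture played at an assumed 24 fps runs 24x faster than life.
print(speedup(1.0))
# Slowing those clouds to an assumed 6x drift needs quarter-frame steps.
print(retime_step(1.0, 6.0))
```

A fractional step like this is one reason the plates would need warping in compositing: played back more slowly than shot, each source frame has to be stretched across several output frames without the clouds visibly popping.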


Little Monster

The whole sequence was first shot using the props, and when they received the cut they assessed the possibilities with Ridley to decide where they could effectively enhance the existing motion, such as the opening action of the head, or coiling into the astronaut’s helmet and into his mouth. The arm breaking needed more realistic muscle movement and the impression of a tighter grip, calling for digital replacement. The balance between its snake-like behaviour and strength, and its ability to move very suddenly, was what made it interesting. Charley said, “Some of the animatronic versions were brought into our office to analyse and copy. It had been built with interior veins and muscle below a layer of semitransparent silicone, so we basically did the same. We scanned it and built the internal piece and then the skin layer, slightly wider, and simulated light travelling, bouncing off it and scattering in the same way.”


Digital Dailies

“A regular schedule of Cinesync conference calls was adhered to. By the end, 10 vendors were submitting shots, so organisation and scheduling were critical. VFX Editor Mitch Glaser started just after the shoot began to manage the constant turnovers. He would cut finished shots back into the edit following each of the editor’s cut changes, and notify the affected vendor.

“Fluent Image was a company that helped to keep the production’s digital workflow under control for delivering and receiving VFX plates and shots to and from vendors. For example, to organise the digital dailies, as soon as the footage was shot on the RED Epics, they downloaded the RAW data and digitised it into stereo Avid media for editorial. When it came time to turn over shots, they all had RAW files stored on the servers. As soon as editorial requested a shot be turned over, they would use Fluent Image’s web interface to automatically send plates to the appropriate vendors. The service was very quick.”


Editorial would upload an EDL to the Fluent server, which would then transcode the original RED camera RAW files into a DPX stereo sequence and deliver that to the vendor’s FTP site. A single shot delivery took only about one hour instead of days, and the service also made adding frames to shots very quick. Fluent continued to customise the server-delivery system throughout production. Sohonet was the fastest way for the vendor to receive files, but otherwise FTP was used.

“Reliance MediaWorks carried out the plate triage for stereo alignment before shots went to the vendors,” Richard said. “This involved colour aligning and adjusting vertical offset, making the shots not only more comfortable to look at but also more accurate and straightforward for the vendors to work on. The adjustments are quite critical and save time when matchmoving and compositing. It also put a control on the level of quality in the stereo plates before the vendors tried to correct them themselves.”
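To give a sense of what adjusting vertical offset involves, here is a minimal sketch that estimates the vertical misalignment between the two eyes from matched feature points and removes it from the right eye. It assumes the matches have already been found by some tracking step; the function names and sample coordinates are hypothetical, and a real alignment pass would also deal with rotation, scale and keystone differences between the cameras.

```python
from statistics import median


def vertical_offset(left_points, right_points):
    """Median vertical disparity, in pixels, between matched features.

    Each argument is a list of (x, y) positions for the same features
    seen in the left and right eye. The median is used so that a few
    bad matches do not skew the estimate.
    """
    if not left_points or len(left_points) != len(right_points):
        raise ValueError("need equal, non-empty lists of matched points")
    return median(r[1] - l[1] for l, r in zip(left_points, right_points))


def align_right_eye(right_points, offset):
    """Shift right-eye points vertically so they line up with the left."""
    return [(x, y - offset) for x, y in right_points]


# Hypothetical matches; the third pair is a deliberately poor match.
left = [(100, 200), (300, 400), (500, 50)]
right = [(90, 203), (292, 403), (488, 60)]

offset = vertical_offset(left, right)
print(offset, align_right_eye(right, offset))
```

Horizontal differences are left untouched because they carry the stereo depth; only the vertical component is treated as an error to be removed.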