A paper presented at SMPTE 2022 Summit showed dynamic AI-driven methods that automate HDR production and the SDR-HDR-SDR round-trip for identical distribution to all screens.

While HDR live production is still maturing, HDR continues to present content creators with major challenges as they migrate from SDR production. A paper presented at the 2022 SMPTE Media Technology Summit discusses work now underway, during the current transition period, that allows both HDR and SDR content to be creatively managed in production and delivered to viewers’ screens.

The paper, titled ‘HDR Production – Tone Mapping Techniques and Round-Trip Conversion Performance for Mastering with SDR and HDR Sources’, was presented by co-writer David Touzé, System Architect for Research and Engineering at InterDigital, an R&D company focussed on mobile and video technology for devices and networks.

The writers believe that tone mapping, or luminance conversion, is an important means of HDR/SDR delivery. Their investigation has led to an understanding of how to use tone mapping to take advantage of HDR’s expanded range of colours and, above all, to the conclusion that tone mapping must be dynamic – that is, adaptive to each individual image in a production.

The SDR Legacy

By now, SDR production has benefited from decades of development in equipment and expertise. However, content producers have had to make their story work within the limited contrast and colours of SDR colourspaces. HDR brings the chance to tell stories with greater creativity and flexibility, but practical obstacles remain for distribution. The wide variation in the capabilities of available HDR displays means that a given HDR or SDR production cannot be viewed optimally on every display, HDR or SDR.

The general solution to this issue is tone mapping, either tone compression, which maps from HDR to a lower-luminance HDR or SDR, or tone expansion, which maps from SDR or HDR to a higher-luminance HDR.
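
As a rough illustration of the two directions, the sketch below uses a simple power-law curve on normalised luminance. This is not the curve used in the paper or in any product; the function names, the peak values (100 and 1000 cd/m²) and the `gamma` parameter are all assumptions chosen for readability.

```python
def _normalise(lum_nits, peak_nits):
    """Clamp a luminance value to [0, peak] and scale it to [0, 1]."""
    return min(max(lum_nits / peak_nits, 0.0), 1.0)


def tone_compress(lum_nits, src_peak=1000.0, dst_peak=100.0, gamma=2.0):
    """Map a higher-luminance signal to a lower peak (e.g. HDR to SDR).

    The exponent 1/gamma lifts mid-tones so they are not crushed
    when the range shrinks. Illustrative curve only.
    """
    return dst_peak * _normalise(lum_nits, src_peak) ** (1.0 / gamma)


def tone_expand(lum_nits, src_peak=100.0, dst_peak=1000.0, gamma=2.0):
    """Map a lower-luminance signal to a higher peak (e.g. SDR to HDR).

    Uses the inverse exponent, so it undoes tone_compress when the
    same peaks and gamma are used.
    """
    return dst_peak * _normalise(lum_nits, src_peak) ** gamma


if __name__ == "__main__":
    hdr_value = tone_expand(50.0)           # SDR mid-grey pushed into HDR
    sdr_again = tone_compress(hdr_value)    # and brought back to SDR
    print(hdr_value, sdr_again)
```

Because both functions share one fixed curve, the pair is trivially reversible, but the same curve is applied to every image regardless of where its details sit.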

In terms of production, although producing HDR content is now the trend, earlier content still exists and lots of new content is still produced in SDR. Again, mixing feeds in both formats in production requires tone expansion for SDR content to match the HDR, and distribution may then require tone compression back to SDR without degradation for legacy devices.

Given this paper’s emphasis on live production, artificial intelligence (AI) is regarded as another key aspect of dynamic tone mapping, allowing image analysis and adjustment to occur automatically and in real time. Conversion must also be tunable, so that producers keep control of their aesthetic choices.

Ultimately, invisible, exact SDR – HDR – SDR round-trip conversion is required to preserve artistic intent for all content, especially for producers mastering both SDR and HDR sources, who also want to exploit the storytelling capabilities of an HDR stream.

The round-trip performance of an existing system, Advanced HDR by Technicolor, that has all of the above abilities, is analysed in this paper. Advanced HDR by Technicolor is a collaboration between Technicolor, Philips and InterDigital. The results demonstrate round-trip functionality that is useful in live TV and post, and makes sure that flexibility in HDR content creation and conversion doesn’t compromise the original creative intent of SDR sources. With wider application, dynamic tone mapping may become a creative skill similar to colour grading today.

Tone Mapping for Live

Many cameras, professional monitors, TVs and mobile devices lack the ability to capture and render the wider contrast and colour possibilities available in HDR video. David Touzé and his colleagues focus on tone mapping as currently the best way to handle those variations among HDR and SDR devices’ peak luminance capabilities.

Video itself is a source of variation. If all images across a project had a uniform luminance distribution, such conversions could be done identically, even mathematically, in a universal process. But most video content is a series of continuously varying images with details concentrated along variable portions of the luminance axis.

Furthermore, not all details captured in, for instance, a 4000 cd/m² HDR project can be displayed on a 650 cd/m² HDR display, nor can they be maintained in a calibrated 100 cd/m² SDR stream. The basic question is, how to ensure that details important to storytelling survive as tone mapping is applied, wherever their placement on the luminance axis of the native HDR image happens to be?

Because the SDR displays that viewers now own must be supported for many years, a way to simultaneously produce HDR and SDR content is necessary. Currently, the main approach is to generate one HDR production and derive an SDR version automatically.

HDR Production and the SDR-HDR-SDR Round-Trip

This paper considers, however, that a final HDR program often combines different sources – live content from both native HDR and native SDR cameras, graphics generated in HDR or SDR, and commercials supplied as SDR. Therefore, since the resulting HDR program should be consistent from shot to shot and source to source in terms of overall luminance, contrast and colour saturation, the SDR sources need to be converted to HDR using a tone expansion tool.

In a mixed-source scenario like this, SDR-HDR-SDR round-trip processing becomes an issue because the SDR sources will have to be converted twice – once using tone expansion to convert to HDR for routing and switching, and once using tone compression to return to SDR for distribution to SDR devices. The processing needs to be imperceptible for two firm reasons. First, commercials are typically SDR and are contractually required not to be altered. Second, the colours of logos, which carry the identity of a company, must be highly stable.

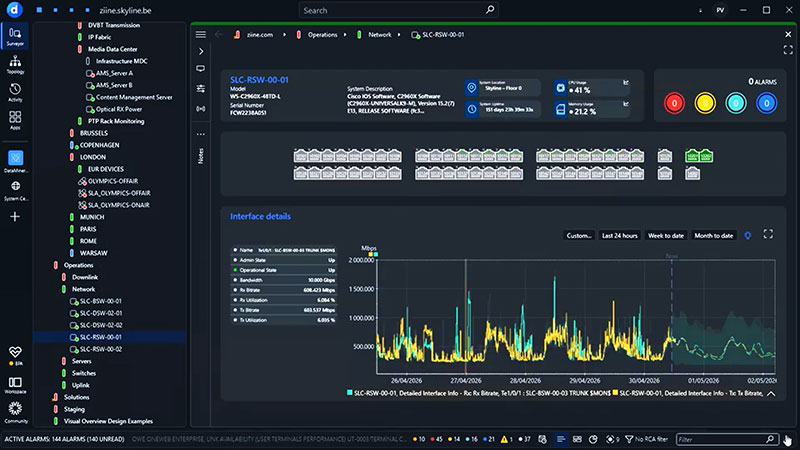

Static approaches to SDR-to-HDR conversion have been developed using 3D LUTs (look-up tables), and were used from 2020 in the production of major sports events including the Tokyo and Beijing Olympics. But relying on static 3D LUTs causes some concern because it feels arbitrary, and may limit options and creativity. A LUT converts the three colour coordinates of each pixel into another set of coordinates, mapping one pixel colour into another.
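
The lookup itself can be sketched as follows. This is a minimal, pure-Python illustration with trilinear interpolation between grid nodes, assuming normalised RGB values in the range 0 to 1; the function names and the identity LUT are hypothetical and do not represent any broadcast LUT used in production.

```python
def make_identity_lut(size):
    """Build a size x size x size grid whose node (r, g, b) holds that same colour."""
    step = 1.0 / (size - 1)
    return [[[(r * step, g * step, b * step) for b in range(size)]
             for g in range(size)]
            for r in range(size)]


def apply_lut(lut, rgb):
    """Map one normalised RGB triple through a 3D LUT (trilinear interpolation)."""
    size = len(lut)
    base, frac = [], []
    for channel in rgb:
        pos = min(max(channel, 0.0), 1.0) * (size - 1)
        lower = min(int(pos), size - 2)   # keep lower + 1 inside the grid
        base.append(lower)
        frac.append(pos - lower)

    out = [0.0, 0.0, 0.0]
    # Blend the eight grid nodes surrounding the input colour.
    for dr in (0, 1):
        for dg in (0, 1):
            for db in (0, 1):
                weight = ((frac[0] if dr else 1.0 - frac[0]) *
                          (frac[1] if dg else 1.0 - frac[1]) *
                          (frac[2] if db else 1.0 - frac[2]))
                node = lut[base[0] + dr][base[1] + dg][base[2] + db]
                for k in range(3):
                    out[k] += weight * node[k]
    return tuple(out)


if __name__ == "__main__":
    lut = make_identity_lut(5)
    print(apply_lut(lut, (0.3, 0.6, 0.9)))
```

The table is fixed once it is built: the same input colour always produces the same output colour, whatever the content of the surrounding image. That is the sense in which a 3D LUT conversion is static.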

Though the conversion is straightforward, it means details must be maintained in both HDR and SDR during processing. Users would need to assign a fixed HDR Diffuse White level as a reference point for the entire project, which is potentially compromising.

This HDR Diffuse White level was devised by the ITU as a reference signal level. It divides the pixels of a scene so that all the important details correspond to the luminance levels below the HDR Diffuse White level. Meanwhile the speculars, the very bright pixels, generally close to white and holding little detail, correspond to the luminance levels above it. Instead of a fixed value, HDR Diffuse White is most useful as a range of values, depending on the type of content (drama, sport etc) and the conditions (indoor vs outdoor, bright or dark), and it can also be subject to dynamic adjustments.
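
The split can be pictured with a short sketch. It assumes luminance values in cd/m² and uses 203 cd/m², the HDR Reference White level given in ITU-R BT.2408, as the default; the function name and the sample values are hypothetical.

```python
# Assumption: 203 cd/m2 is the HDR Reference White from ITU-R BT.2408.
# A production may choose a different level for its content.
DEFAULT_DIFFUSE_WHITE_NITS = 203.0


def split_at_diffuse_white(luminances_nits,
                           diffuse_white_nits=DEFAULT_DIFFUSE_WHITE_NITS):
    """Separate pixel luminances into detail pixels and speculars.

    Pixels at or below the diffuse white level are treated as carrying
    the important scene detail; pixels above it are treated as speculars.
    """
    details = [v for v in luminances_nits if v <= diffuse_white_nits]
    speculars = [v for v in luminances_nits if v > diffuse_white_nits]
    return details, speculars


if __name__ == "__main__":
    pixels = [50.0, 150.0, 203.0, 400.0, 900.0]   # made-up sample values
    print(split_at_diffuse_white(pixels))
    # Raising the level reclassifies some bright pixels as detail.
    print(split_at_diffuse_white(pixels, diffuse_white_nits=500.0))
```

Moving the level changes which pixels are protected as detail, which is why a single fixed value for a whole project can be a compromise.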

Another option is to design custom LUTs for different situations, but that means ensuring that everyone knows the correct LUT to use for each device in the production, and for reverse processing when setting up a round trip.

Dynamic Tone Mapping

Since, as mentioned, the position at which details concentrate along the luminance and colour axes will vary depending on the image and content, the writers of this paper believe that dynamic conversions can optimise mapping between SDR and HDR, preserving details where needed – either highlights or shadows or both – and allowing dynamic adjustment of the HDR Diffuse White level.

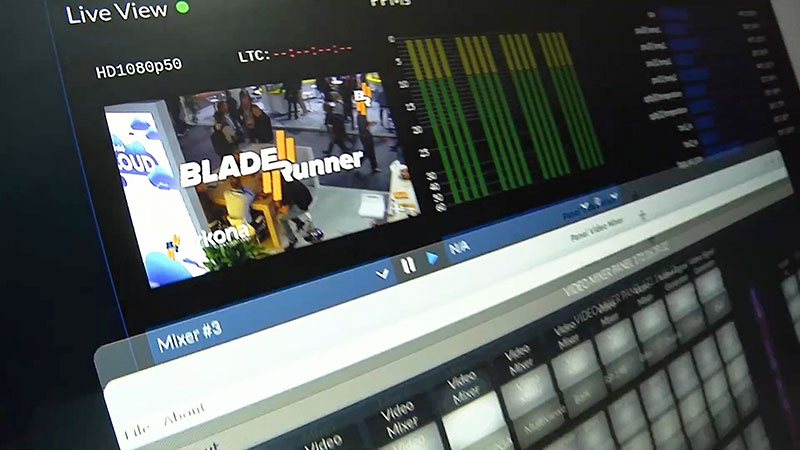

This kind of conversion – dynamic tone mapping – already exists in post as the work of colourists who make expert decisions about colour across an entire film or TV production. Live productions require real-time processing, also already done by video engineers performing camera shading or static 3D LUT conversions. Note that these jobs require a dedicated team member and, if adjustments are needed at each source change and tuning is reactive, the work may cause delays during the event.

Introducing an AI engine into the production environment addresses the need for speed and accuracy. It can analyse each individual image in real-time, make adjustments as needed, and requires little or no supervision. The result is dynamic, real-time conversion between SDR and HDR, automated but with the ability to be tuned up front to ensure consistency, and to match the particular production and its aesthetic.
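
To make the idea of per-image adaptation concrete, the sketch below replaces the AI analysis with a very simple statistic: it estimates a diffuse white level for each frame from a luminance percentile, then builds a two-segment compression curve around it. Everything here is an assumption for illustration (the percentile, the 100 to 400 cd/m² clamp range, the knee position and the function names); it is not the analysis described in the paper.

```python
def estimate_diffuse_white(frame_nits, percentile=0.9, low=100.0, high=400.0):
    """Pick a per-frame diffuse white from a luminance percentile.

    The result is clamped to [low, high] so that one unusual frame
    cannot push the level to an extreme value.
    """
    ordered = sorted(frame_nits)
    index = min(int(percentile * len(ordered)), len(ordered) - 1)
    return min(max(ordered[index], low), high)


def compress_frame(frame_nits, hdr_peak=1000.0, sdr_peak=100.0, knee=0.9):
    """Compress one HDR frame to SDR with a curve adapted to that frame.

    Luminances up to the estimated diffuse white share the lower part of
    the SDR range (up to knee * sdr_peak); speculars share the remainder.
    Returns the mapped frame and the diffuse white that was used.
    """
    white = estimate_diffuse_white(frame_nits)
    knee_nits = knee * sdr_peak
    mapped = []
    for value in frame_nits:
        value = min(max(value, 0.0), hdr_peak)
        if value <= white:
            mapped.append(knee_nits * value / white)
        else:
            headroom = (value - white) / (hdr_peak - white)
            mapped.append(knee_nits + (sdr_peak - knee_nits) * headroom)
    return mapped, white


if __name__ == "__main__":
    dark_frame = [1, 2, 5, 10, 20, 30, 40, 50, 60, 70]
    bright_frame = [50, 100, 200, 300, 500, 600, 700, 800, 900, 1000]
    for frame in (dark_frame, bright_frame):
        mapped, white = compress_frame(frame)
        print(white, mapped)
```

With these sample frames, the same 50 cd/m² input lands at a different SDR level in the dark frame than in the bright one, because the curve follows the content. A static LUT cannot do this.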

Advanced HDR by Technicolor

Dynamic tone mapping, and the SDR-HDR-SDR round trip in particular, places a complex set of demands on video teams. Its dynamic nature gives operators ample flexibility for content creation, while its techniques must still ensure that the SDR-HDR-SDR round trip gives a perceptually identical result. The dedicated HDR production, distribution and display tools in Advanced HDR by Technicolor, the subject of the analysis conducted for this paper, were developed to make the most of the image quality of HDR formats.

Advanced HDR by Technicolor round-trip processing

The system has two main tools, used individually or together, to support file-based and real-time workflows. The first, Technicolor HDR Intelligent Tone Management (ITM), is a tone expansion tool used in production to up-convert SDR camera signals or existing SDR content – such as archival footage, contribution feeds or commercials – to the production’s preferred HDR format – HLG, PQ or SLog3.

The second tool, Technicolor SL-HDR, is used for content distribution and therefore works across both production and consumer environments. During production, HDR content is analysed and down-converted if needed, and standardised metadata describing that conversion is recorded to accompany the converted signal. On the consumer side, the relevant metadata is applied to reconstruct the original signal, or to adapt the content to the viewer’s display.

SL-HDR metadata makes it possible to distribute a single version of the content, in either SDR or HDR. When distributing SDR content, SL-HDR serves as a tone compression tool. ITM and SL-HDR run independently, making their own decisions dynamically to preserve the content’s artistic intent. When combined, they make the conversions reversible, resulting in a perceptually identical SDR-HDR-SDR round trip.
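
The principle of a metadata-driven round trip can be shown with a toy example. The sketch assumes a simple power-law expansion whose parameters are recorded per frame in a plain dictionary; the actual ITM processing and the SL-HDR metadata format are far richer and are not reproduced here, and all names and values are hypothetical.

```python
def expand_with_metadata(sdr_frame, contrast=1.5,
                         sdr_peak=100.0, hdr_peak=1000.0):
    """Expand an SDR frame to HDR and record how it was done.

    'contrast' stands in for a creator-tuned parameter. The returned
    metadata holds everything needed to invert the expansion.
    """
    hdr_frame = [hdr_peak * (v / sdr_peak) ** contrast for v in sdr_frame]
    metadata = {"contrast": contrast,
                "sdr_peak": sdr_peak,
                "hdr_peak": hdr_peak}
    return hdr_frame, metadata


def compress_with_metadata(hdr_frame, metadata):
    """Return to SDR by inverting the expansion described in the metadata."""
    inverse = 1.0 / metadata["contrast"]
    return [metadata["sdr_peak"] * (v / metadata["hdr_peak"]) ** inverse
            for v in hdr_frame]


if __name__ == "__main__":
    sdr_source = [0.0, 10.0, 50.0, 100.0]
    # The creator may tune the expansion differently for each frame.
    for contrast in (1.2, 1.5, 2.0):
        hdr, meta = expand_with_metadata(sdr_source, contrast=contrast)
        restored = compress_with_metadata(hdr, meta)
        error = max(abs(a - b) for a, b in zip(sdr_source, restored))
        print(contrast, error)
```

Because the compression step reads its parameters from the metadata rather than making an independent guess, the expansion can vary from frame to frame and the SDR output still matches the SDR source to within floating-point precision.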

Since the SL-HDR metadata accurately describe the tone expansion, the parameters of the ITM tool (brightness, saturation, contrast) remain under the control of the content creator, while the process still ensures that artistic intent is preserved in the SDR content.

www.smpte.org